In-depth analysis on Valorant’s Guarded Regions

Disclaimer⌗

This post is not meant as an attack on Riot Games’ Vanguard or Microsoft’s Windows. Both companies have done an excellent job with their products and will continue to do so for the coming years. The content of this post was gathered solely by me, and I am not tied to any game hack publisher or similar entity. I have no intention of harming any company’s product, and everything here is constructed for educational purposes.

Introduction⌗

In the cutthroat world of online gaming, there is no greater threat to the sanctity of competition than cheating. From sophisticated aimbots to cunning wallhacks, cheaters have employed every trick in the book to gain an unfair advantage over their rivals. As a result, game developers are constantly pushing the boundaries of innovation to stay one step ahead of these nefarious tactics.

Enter Vanguard - Riot Games’ revolutionary anti-cheat system designed specifically for their popular tactical shooter, Valorant. In this article, we’ll delve into the intricacies of how Vanguard operates by analyzing its guarded regions. By gaining a deeper understanding of how this cutting-edge technology safeguards the gameplay experience in Valorant, players can truly appreciate the level of dedication and meticulousness that goes into ensuring fair play and balanced competition. Are you ready to discover the secrets behind Vanguard’s fortress?

The problem⌗

To eliminate cheaters, a game’s anti-cheat must keep innovating. The Vanguard team has deployed a very smart technique to protect game variables from being accessed outside of the game. As any cheater might tell you, VALORANT is a particularly challenging game to deal with because it interferes with cheating necessities like reading memory while keeping performance overhead low. Any partial attempt to circumvent such mechanisms is typically met with an unavoidable page fault. More often than not, this confuses cheaters and leads to trivial and ineffective second-guessing of their code.

Reversing the logic⌗

As someone who has delved into analyzing Vanguard, I had devised a plan to create a semi-emulator that could bypass many of its detection mechanisms. During my research, I came across an interesting Input/Output Control (IOCTL) command that was being sent from the game to Vanguard. At first, I assumed that it was an initialization command meant to inform Vanguard of the game’s presence. However, upon further investigation, I discovered that the command was actually directed to a large and complex function in stub.dll, which is the packer component of VALORANT. This module is responsible for protecting the game from potential threats by utilizing a variety of techniques, including binary encryption and more. As I studied the function, it became clear to me just how formidable Vanguard’s defenses truly were. It was a humbling experience to see firsthand the level of sophistication and complexity that had gone into protecting the game from cheaters. The function responsible for hiding memory looked like the following:

NOTE: This code is heavily edited and stripped to be readable.

    uint64_t* InputBuffer = (uint64_t*)malloc(8); // Allocate 8 bytes for the input buffer.
    *InputBuffer = __rdtsc(); // Write the TSC timestamp to the allocated input buffer.

    // Input Buffer encryption block removed.

    uint64_t* OutputBuffer = (uint64_t*)malloc(16); // Allocate 16 bytes for the output buffer.
    memset(OutputBuffer, 0, 16); // Nullify the output buffer memory allocation.

    // Call DeviceIoControl to request the shadow base from vgk, and also check if it
    // failed, and if so, free the allocations and return a failure code.
    if ( !DeviceIoControl(Data::VgkHandle, [REDACTED], InputBuffer, 8, OutputBuffer, 16, &BytesReturned, 0)
        || BytesReturned != 16 )
    {
        free(InputBuffer);
        free(OutputBuffer);
        return EPackmanStatus::VanguardFailure;
    }

    // Output Buffer decryption block removed.

    // Write the shadow base retrieved from vgk to the 2nd argument passed to the function.
    *Arg2_pShadowBase = OutputBuffer[1];

    // Free the allocations previously created.
    free(InputBuffer);
    free(OutputBuffer);
    return EPackmanStatus::Success;

After analyzing this, I became interested to see what exactly was being sent to stub.dll by VGK. So, I dumped the output buffer and proceeded to decrypt it, which led me to obtain this: 17 96 75 76 3E 29 00 00 00 00 00 00 80 00 00 00. We can format it further so we don’t just look at some random hex gibberish.

    OutputBuffer[0] = 0x293E76759617 // TSC timestamp
    OutputBuffer[1] = 0x008000000000 // Shadow base
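
The formatting step above is just a little-endian read of each 8-byte half of the dump. The following is a minimal sketch of that decoding in plain C++; `ReadLE64` and `kDump` are names made up for this illustration and are not part of stub.dll or vgk.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

// Reads 8 bytes starting at `offset` as a little-endian 64-bit integer.
// Hypothetical helper for illustration only.
constexpr std::uint64_t ReadLE64(const std::array<std::uint8_t, 16>& bytes, std::size_t offset)
{
    std::uint64_t value = 0;
    for (std::size_t i = 0; i < 8; i++)
        value |= static_cast<std::uint64_t>(bytes[offset + i]) << (8 * i);
    return value;
}

// The decrypted output buffer quoted in the post.
constexpr std::array<std::uint8_t, 16> kDump = {
    0x17, 0x96, 0x75, 0x76, 0x3E, 0x29, 0x00, 0x00,
    0x00, 0x00, 0x00, 0x00, 0x80, 0x00, 0x00, 0x00
};

// First half is the TSC timestamp, second half is the shadow base.
static_assert(ReadLE64(kDump, 0) == 0x293E76759617ull, "TSC timestamp");
static_assert(ReadLE64(kDump, 8) == 0x8000000000ull, "shadow base");
```

The `static_assert` lines check the two values at compile time, so the block only builds if the decoding matches the values listed above.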

After seeing this, my curiosity got the best of me and I wanted to investigate further by examining the second entry in the output buffer. It turned out to be the shadow base used for various game variables such as World, Engine, Names, and other objects. This led me to ponder how the game was accessing these invalid addresses in memory without crashing. One idea I had was that stub.dll created a memory allocation and wrote to it when certain whitelisted threads were accessing it by catching the page fault exception. However, this did not seem to involve VGK, so I refocused my attention on analyzing VGK. After a bit of digging, I finally found the IOCTL handler that dealt with this specific command, but upon initial inspection, it was difficult to comprehend.

    // Sanity check to make sure only the game can access the variable below.
    if ( IoGetCurrentProcess() != Data::GameProcess )
        return 0;

    // Shifting the PML4 index by 39.
    return (uint64_t)Data::FreePML4Index << 39;

The function by itself doesn’t tell us much until you look at the code that sets up the variables it references, which in our case looks something like the following:

NOTE: This code is heavily edited and stripped to be readable and more direct, removing any code that is unnecessary for the purpose of this blog post.

    // Sanity checks to verify that it's executing under the game's context.
    if ( PsGetThreadProcess(CurrentThread) != Data::GameProcess
        || __readcr3() != Data::GameCR3 )
        return;

    bool WriteToCR3 = true;

    // Disabling interrupts, so that the scheduler doesn't switch cores.
    _disable();

    // Copying the content of the current game cr3 to the new cloned cr3.
    memmove(Data::CloneVirtCR3, Data::VirtGameCR3, 0x1000);
    Data::CloneVirtCR3[Data::FreePML4Index] = Data::ShadowPML4Value;

    // Loop through the whitelisted threads array, and if the current running thread
    // is inside it, then allow cr3 overwrite.
    for ( int ThreadIdx = 0; ThreadIdx < Data::ThreadCount; ThreadIdx++ )
    {
        WriteToCR3 = Data::ThreadArray[ThreadIdx] == CurrentThread;
        if ( WriteToCR3 )
            break;
    }

    DoTask:
    // Writing the clone cr3 to the cr3 register.
    if ( WriteToCR3 )
        __writecr3(Data::CloneCR3);

    // Flushing TLB by toggling CR4:PGE.
    if ( CanFlushTLB )
    {
        uint64_t OriginalCR4 = __readcr4();
        __writecr4(OriginalCR4 ^ 0x80);
        __writecr4(OriginalCR4);
    }

    // Enabling interrupts.
    _enable();

Breaking down complex code into smaller, understandable components is a great approach to gain insights into its workings. In this case, by breaking down the function’s logic, we can see that its purpose is to clone the game’s PML4 table and write its own entry onto a free slot in the table. This approach allows the game to access the shadow region from a whitelisted thread without any noticeable impact on performance. This is an ingenious solution that demonstrates the level of innovation and dedication that goes into developing an effective anti-cheat system like Vanguard.
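
The clone-and-patch step can be modelled in user mode with plain arrays. This is only a sketch of the idea, assuming a PML4 table is treated as 512 raw 64-bit entries; `Pml4Table` and `CloneWithShadow` are hypothetical names, and no real page tables are touched.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

// A PML4 table modelled as 512 raw 64-bit entries (illustration only).
using Pml4Table = std::array<std::uint64_t, 512>;

// Returns a copy of `original` with `shadowEntry` written into `freeIndex`.
// The original table is left untouched, so any thread still running on it
// has no mapping for the addresses covered by that slot.
Pml4Table CloneWithShadow(const Pml4Table& original, std::size_t freeIndex, std::uint64_t shadowEntry)
{
    Pml4Table clone = original;      // same role as the memmove of 0x1000 bytes
    clone[freeIndex] = shadowEntry;  // same role as writing the shadow PML4 value
    return clone;
}
```

A whitelisted thread would run with the clone loaded, while every other thread keeps using the original table, whose entry at `freeIndex` stays empty.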

Paging tables⌗

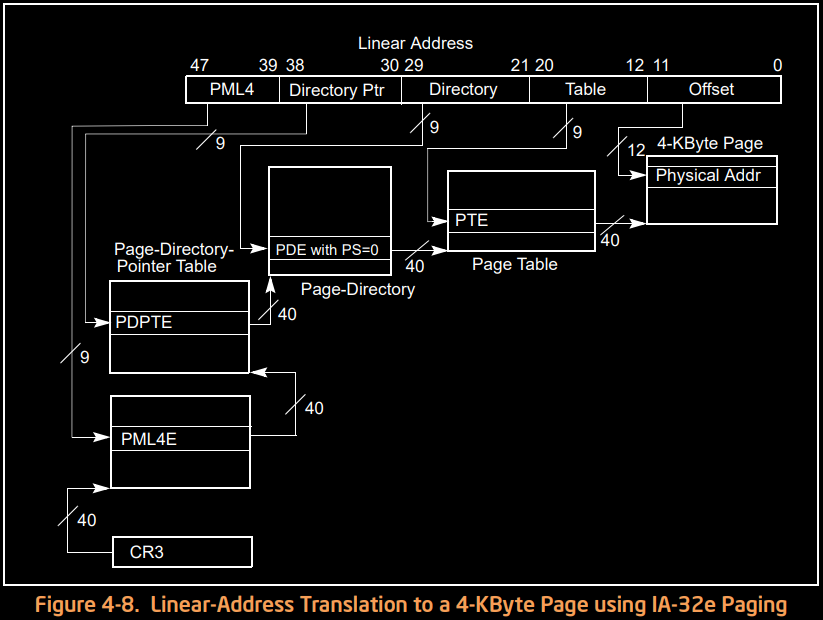

To understand this better, we’ll need to review how paging tables work. Fortunately, Intel provides documentation along with a diagram that can help us understand it better.

NOTE: The following explanation of paging tables is heavily simplified for readers who are new to the topic. It is recommended to refer to the Intel manual for a better explanation.

NOTE: This section of the post only talks about the 4 level paging table hierarchy, and ignores the 5 level one.

We can break down this diagram into more detail to understand how a virtual address can reference a physical address. We need to first break down each step. A linear address is essentially a bunch of indexes mashed together to create an address that the CPU can interpret and use to find what memory it’s referring to in the RAM. As seen in the diagram above, the linear address contains indexes for the following: PML4, PDPT (Page Directory Pointer), PD (Page Directory), PT (Page Table), and finally, the page offset.
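
As a small sketch of that breakdown under 4-level paging, each table index is 9 bits wide and the page offset is 12 bits wide. `LinearAddressParts` and `SplitLinearAddress` are names invented for this example.

```cpp
#include <cstdint>

// The five fields packed into a 48-bit linear address (4-level paging).
struct LinearAddressParts
{
    unsigned pml4;   // bits 39..47
    unsigned pdpt;   // bits 30..38
    unsigned pd;     // bits 21..29
    unsigned pt;     // bits 12..20
    unsigned offset; // bits 0..11
};

// Splits a linear address into its table indexes and page offset.
constexpr LinearAddressParts SplitLinearAddress(std::uint64_t va)
{
    return {
        static_cast<unsigned>((va >> 39) & 0x1FF),
        static_cast<unsigned>((va >> 30) & 0x1FF),
        static_cast<unsigned>((va >> 21) & 0x1FF),
        static_cast<unsigned>((va >> 12) & 0x1FF),
        static_cast<unsigned>(va & 0xFFF)
    };
}

// The shadow base from earlier in the post lands in PML4 slot 1.
static_assert(SplitLinearAddress(0x8000000000ull).pml4 == 1, "shadow base uses PML4 index 1");
```

Running the shadow base `0x8000000000` through it gives PML4 index 1 with every other field zero, which matches the handler shifting its PML4 index left by 39.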

Modern x86-64 processors contain a set of registers called control registers. The one we are mostly interested in is the third register in this set, CR3. It is important because the processor uses it to locate the paging table hierarchy: it holds the physical address of the PML4 table, along with some more information stored in certain bits, which is out of scope for this post. Each table in the hierarchy can contain a maximum of 512 entries, and the hierarchy goes as follows. Each PML4 entry refers to a PDPT. A PDPT entry can either map a 1-gigabyte page directly or refer to another table named the PD. A PD entry can either map a 2-megabyte page directly or refer to the final table, the PT, whose entries each map 4 kilobytes of memory.
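
To put numbers on the hierarchy, here is a rough sketch of how the PML4 physical address can be pulled out of a CR3 value and how much address space one entry covers at each level. The mask assumes the architectural maximum of 52 physical address bits (real CPUs may implement fewer), and all names are made up for the example.

```cpp
#include <cstdint>

// Bits 12..51 of CR3 hold the physical address of the PML4 table.
// The low 12 bits carry flags or a PCID and are masked off here.
constexpr std::uint64_t Pml4PhysicalFromCr3(std::uint64_t cr3)
{
    return cr3 & 0x000FFFFFFFFFF000ull;
}

// Bytes of address space covered by a single entry at each level.
constexpr std::uint64_t kBytesPerPtEntry   = 1ull << 12; // 4 KiB page
constexpr std::uint64_t kBytesPerPdEntry   = 1ull << 21; // 2 MiB
constexpr std::uint64_t kBytesPerPdptEntry = 1ull << 30; // 1 GiB
constexpr std::uint64_t kBytesPerPml4Entry = 1ull << 39; // 512 GiB

// Each level covers 512 entries of the level below it.
static_assert(kBytesPerPdEntry   == 512 * kBytesPerPtEntry,   "PD entry = 512 PT entries");
static_assert(kBytesPerPdptEntry == 512 * kBytesPerPdEntry,   "PDPT entry = 512 PD entries");
static_assert(kBytesPerPml4Entry == 512 * kBytesPerPdptEntry, "PML4 entry = 512 PDPT entries");
```

The last constant is why a single free PML4 slot is enough to describe an entire hidden region: one entry spans 512 GiB of virtual address space.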

Knowing the basic structure of paging tables, we can go into some more details about it. For example, each table’s entry contains a set of flags to determine if the page can be written to, its caching type, if it’s executable or not, etc. The entry also includes a set of bits reserved for storing the page frame number (basically the physical address chopped up so the page offset is ignored). There is also a flag for determining if the page is considered to be a large page, referred to in the Intel manual as the “PS” bit. This bit is used to figure out whether to move on to the next hierarchy of tables by accessing the physical address provided or to just use it as the final physical address. Once the address is translated by the CPU, any access to that linear address while paging is enabled for that core will reflect the final physical address contained in the paging table’s entry.
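
The flags mentioned above can be read out of a raw 64-bit entry with a few masks. This is a simplified sketch: the bit positions follow the Intel manual's 4-level paging layout, the PS bit is only meaningful in PDPT and PD entries, and the page frame number extraction ignores the extra low bits that large-page entries reserve. `EntryInfo` and `DecodeEntry` are hypothetical names.

```cpp
#include <cstdint>

// A few of the fields stored in a paging-structure entry.
struct EntryInfo
{
    bool present;        // bit 0
    bool writable;       // bit 1
    bool userAccessible; // bit 2
    bool largePage;      // bit 7 ("PS", valid for PDPT and PD entries)
    bool noExecute;      // bit 63
    std::uint64_t pageFrameNumber; // bits 12..51
};

// Decodes a raw 64-bit paging entry into the fields above.
constexpr EntryInfo DecodeEntry(std::uint64_t entry)
{
    return {
        (entry & (1ull << 0)) != 0,
        (entry & (1ull << 1)) != 0,
        (entry & (1ull << 2)) != 0,
        (entry & (1ull << 7)) != 0,
        (entry & (1ull << 63)) != 0,
        (entry >> 12) & 0xFFFFFFFFFFull
    };
}
```

Multiplying `pageFrameNumber` by 0x1000 gives back the physical address of either the next table or the final page, depending on `largePage` and the level the entry was read from.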

Process isolation⌗

Let’s dive into the fascinating world of operating systems! Have you ever wondered how an operating system handles multiple processes and ensures that they don’t interfere with each other? It all comes down to paging tables. In short, every process has its own set of paging tables that isolate its memory from other processes. When you try to access another process’s memory, you’ll be met with an exception in your program.

But how does Windows execute code from multiple processes simultaneously? The answer lies in thread scheduling. This essential measure ensures that the process context is set correctly when a thread is executing. It’s a delicate balance, but it’s what keeps your computer running smoothly. The context switch is also where the process’s cr3 gets loaded, which makes it quite important for both us and Vanguard.

Basic implementation of the idea⌗

To implement this, we must first figure out how to shadow these paging tables. Modifying KPROCESS->DirectoryTableBase would hand the new paging tables to every thread that runs in or attaches to the process, which is not what Vanguard or we want, as it defeats the purpose of having “hidden” memory. Hence, we must figure out how to intercept the thread scheduler’s context switch so that only certain threads get access to our secret hidden memory. To do this, we must take a look at where the context swap occurs. Luckily for us, Microsoft publishes public symbols for their kernel, so we can easily browse and locate the functions responsible for context switches. After analyzing the kernel, I found the function responsible for modifying the current paging table context, which is conveniently called “SwapContext”.

⚠️ DISCLAIMER: I am aware that the code snippet may be hard to read due to the alignment; you are free to copy it elsewhere to read it. Also, this code has been heavily stripped and modified for your ease.

    bool SwapContext()
    {
        // These variables are stored in NV registers.
        PKTHREAD NewThread = RSI;
        PKTHREAD OldThread = RDI;

        // Wait for the "new" thread to stop running.
        if ( NewThread->Running )
        {
            while ( NewThread->Running )
                _mm_pause();
        }

        NewThread->Running = 1;
        NewProcess = NewThread->ApcState.Process;

        if ( NewProcess != OldThread->ApcState.Process )
        {
            NewDirectoryBase = NewProcess->DirectoryTableBase;

            // Bit 63 is the highest bit of a 64-bit value; a shift by 64 would be invalid.
            if ( KiKvaShadow & 1 )
                Prcb->KernelDirectoryTableBase = (NewDirectoryBase & 2) ? NewDirectoryBase | (1ull << 63) : NewDirectoryBase;

            __writecr3(NewDirectoryBase);

            if ( KiKvaShadow & 1 && (NewDirectoryBase & 2) == 0 )
            {
                // Flush TB
                CR4 = __readcr4();
                __writecr4(CR4 ^ 0x80);
                __writecr4(CR4);
            }
        }

        OldThread->Running = 0;
        return NewThread->ApcState.KernelApcPending ? (NewThread->SpecialApcDisable | NewThread->WaitIrql) : false;
    }

Within the scope of this post, this function performs the following actions. First, it waits for the new thread to stop running elsewhere so that two cores never execute the same thread. Then, if the new thread belongs to a different process, it gets the directory base (CR3) from the new thread’s process and, when KVA shadowing is enabled, stores it in the PRCB, the structure that acts as global storage for the currently executing processor. After that, it writes the new process’s CR3 to the CR3 register and then flushes the TB if required.

The exploit.⌗

This aspect of the post is the most interesting one 👀. However, this part of the post has been rescheduled for later and will have its own separate post simply due to the content being too large to discuss in a merged blog post. Fortunately, the code for the re-implementation of shadow regions will be available now as a teaser 😄.

    void SwapContextHook( )
    {
        if ( !WhitelistedThreads.Contains( KeGetCurrentThread( ) ) )
            return;

        memcpy( Paging::ClonePML4Virt, Paging::ClientPML4Virt, 0x1000 );
        Paging::ClonePML4Virt[ Paging::FreePML4Index ] = Paging::ShadowPML4;

        cr3 CR3 = cr3{ .flags = __readcr3( ) };
        CR3.address_of_page_directory = Paging::CloneCR3Phys >> 12;
        SetCR3( CR3 );
    }

The snippet of code above shows our hook callback for intercepting context swaps, which allows us to modify the current address space. Our callback simply copies over the PML4 table to our clone table, and then we insert our own PML4 entry into the free slot, and finally, we set the new address space to our cloned one. This allows our “whitelisted” thread to have access to our custom paging tables and creates a virtual address hidden from every other thread.
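
The decision the hook makes can be modelled in user mode as a pure lookup: given a thread, which CR3 should be loaded? The sketch below is only a model of that choice, with invented names (`AddressSpaceSelector`, `Cr3ForThread`) and plain integers standing in for thread objects and CR3 values.

```cpp
#include <cstdint>
#include <unordered_set>

// User-mode model of the per-thread address space choice (illustration only).
struct AddressSpaceSelector
{
    std::uint64_t processCr3; // the process's normal paging tables
    std::uint64_t cloneCr3;   // the cloned tables containing the shadow entry
    std::unordered_set<std::uint32_t> whitelistedThreads;

    // Whitelisted threads get the clone, every other thread keeps the original.
    std::uint64_t Cr3ForThread(std::uint32_t threadId) const
    {
        return whitelistedThreads.count(threadId) ? cloneCr3 : processCr3;
    }
};
```

Because the choice is made per thread at context-switch time, two threads of the same process can end up with different views of the same virtual address.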

To whitelist our thread we simply get the current thread and insert it into an array which we can check for in our hook. The finished code for this goes as follows:

    case WhitelistThreadCTL:
    {
        // Insert the current thread to the whitelist thread array.
        WhitelistedThreads.Insert( KeGetCurrentThread( ) );

        // Fix up the clone table.
        memcpy( Paging::ClonePML4Virt, Paging::ClientPML4Virt, 0x1000 );
        Paging::ClonePML4Virt[ Paging::FreePML4Index ] = Paging::ShadowPML4;

        // Set the new cr3 to the clone one.
        cr3 CR3 = cr3{ .flags = __readcr3( ) };
        CR3.address_of_page_directory = Paging::CloneCR3Phys >> 12;
        SetCR3( CR3 );

        // Basic logging.
        DBG( "Whitelisted thread %d\n", PsGetCurrentThreadId( ) );

        // To inform the client that everything went well.
        *SystemBuffer = 0x1BADD00D;
        break;
    }

The code above requires the cr3 and paging tables to be set up beforehand. It also switches to the clone cr3 itself, because a context swap does NOT occur most of the time when execution returns to usermode; it mainly occurs right when a syscall instruction is executed. Hence, we must set the cr3 before returning. To initialize the current cr3 virtual address and free PML4 index, we must do the following:

    case InitializeCTL:
    {
        // Clear the array of whitelisted threads, as only 1 process at a time is allowed.
        WhitelistedThreads.Clear( );

        // Read the cr3 and get the physical address of the pml4 table.
        cr3 CR3 = { .flags = __readcr3( ) };

        // Convert the physical address to a virtual address, though this is process respective.
        // Meaning that this address is only valid on the current address space.
        Paging::ClientPML4Virt = (pml4e_64*)MmGetVirtualForPhysical( PHYSICAL_ADDRESS{ .QuadPart = LONGLONG( CR3.address_of_page_directory << 12 ) } );

        // Walk the usermode range ONLY to find a free PML4 entry.
        for ( int i = 0; i < 256; i++ )
        {
            if ( !Paging::ClientPML4Virt[ i ].flags )
            {
                Paging::FreePML4Index = i;
                break;
            }
        }

        // Write the free pml4 index to the system buffer, so the usermode is aware of it.
        *SystemBuffer = Paging::FreePML4Index;

        // Basic logging for us.
        DBG( "Initialized paging for process %d\n", PsGetCurrentProcessId( ) );
        break;
    }

To test this proof of concept from usermode, I wrote a simple program that uses IsBadReadPtr to safely test whether the memory is accessible. The usermode code goes as follows:

    int main( )
    {
        printf( "[*] Yumekage Usermode Demo\n\n" );

        // Initialize the communication with the driver.
        if ( !Comm::Initialize( ) )
        {
            printf( "[-] Failed to initialize comms.\n" );
            Sleep( 5000 );
            return 0;
        }

        printf( "[+] Initialized comms.\n" );

        // Get the hidden page's address.
        uint64_t Address = Comm::InitializeHiddenPages( );
        if ( !Address )
        {
            printf( "[-] Failed to initialize hidden pages.\n" );
            Sleep( 5000 );
            return 0;
        }

        printf( "[+] Hidden pages created at 0x%llX\n", Address );

        // Use IsBadReadPtr to validate the hidden page.
        printf( "[*] Trying to access page before whitelisting: %s\n", IsBadReadPtr( (void*)Address, 1 ) ? "Failed" : "Success" );

        // Whitelist the current thread.
        if ( !Comm::WhitelistCurrentThread( ) )
        {
            printf( "[-] Failed to whitelist thread.\n" );
            Sleep( 5000 );
            return 0;
        }

        // Use IsBadReadPtr to validate the hidden page.
        printf( "[*] Trying to access page after whitelisting: %s\n", IsBadReadPtr( (void*)Address, 1 ) ? "Failed" : "Success" );

        // Show that the address is able to be read and written to.
        for ( int i = 0; i <= 5; i++ )
        {
            Sleep( 50 );

            // Force the compiler to not optimize this out and reference 'i' for our print by using "volatile".
            *(volatile int*)Address = i;
            printf( "[*] Read and written index %d\n", *(volatile int*)Address );
        }

        printf( "[*] Done exiting...\n" );

        // Disconnect from the driver.
        Comm::Destroy( );
        Sleep( -1 );
        return 0;
    }

If everything is set up correctly, we should be able to create a hidden memory range that is only accessible from our thread. As shown in the gif below, we are unable to access the shadow memory range unless we whitelist our thread.

The logs in WinDbg look like the following.

Back to Vanguard and VALORANT⌗

Before I had analyzed any of this logic, I would always see people talking about “bypassing” the guarded/shadow regions by walking the big pool table to find the memory allocation set up for the shadow page. Personally, I find this quite stupid, as it can be broken by very simple tactics and can even produce false positives: most cheats and public code only check whether the allocation size is 2 MB and the pool tag is ‘TnoC’, which is the tag used for memory allocated by MmAllocateContiguousMemory. The problems don’t end with false positives. This method can be quite slow for any usable cheat, as it requires the user to constantly call their driver to read memory (assuming the person can only read memory through their kernel module). Another fault is that cheaters have been hardcoding the shadow base (PML4 entry) since release, because Vanguard uses the first free PML4 entry available, which is usually either 1 or 2. However, this is exactly what breaks their entire logic: a simple modification inside vgk like the one below can break most cheats without causing any compatibility issues when deployed at scale.

    /*
     * Finds a random free PML4 entry.
     */
    bool FindFreeIndex( _In_ pml4e_64* PML4, _Out_ int* FreeIndexOut )
    {
        // Basic sanity checks.
        if ( !PML4 || !FreeIndexOut )
            return false;

        int FreeIndexes[ 256 ];
        int NumOfFreeIndexes = 0;

        for ( int i = 0; i < 256; i++ )
        {
            // Skip entries which are non zero, as it means it's used.
            if ( PML4[i].flags )
                continue;

            // Store the index in the array.
            FreeIndexes[ NumOfFreeIndexes++ ] = i;
        }

        // If no free entry was found, return false. This usually should not happen.
        if ( !NumOfFreeIndexes )
            return false;

        // Get a random entry in the free index array and write to FreeIndexOut with it,
        // and return true to indicate that everything went well.
        // You are free to change the random method to anything else other than using
        // the TSC timestamp, but at the end it does not make a difference.
        // The modulo is taken by the number of stored entries so the pick stays in range.
        *FreeIndexOut = FreeIndexes[ __rdtsc( ) % NumOfFreeIndexes ];
        return true;
    }

Another modification that can be made by the Vanguard team is to remove the pool entry entirely, so that scanning the big pool table to find the memory allocation will not be possible.

    /*
     * Removes the pool entry for the allocation specified.
     * Disclaimer: Very risky and unsafe, if something went wrong you will bugcheck!
     */
    bool RemovePoolEntry( _In_ void* Allocation, _In_ POOL_TYPE Type )
    {
        // Statically store the ExRemovePoolTag pointer so we don't have to search for it every call.
        static void(*ExRemovePoolTag)(_In_ void* Alloc, _Out_ uint32_t* PoolTag, _Out_ uint64_t* Size, _In_ POOL_TYPE Type) = 0;

        if ( !ExRemovePoolTag )
        {
            // Signature scan for ExRemovePoolTag in ntoskrnl.
            uint64_t Addr = Utils::FindPattern( KernelBase, "\xE8\xCC\xCC\xCC\xCC\x4C\x8B\x4D\xCC\x49\x81\xF9\x00\x10\x00\x00" );

            // If not found, return false.
            if ( !Addr )
                return false;

            // Resolve reference.
            ExRemovePoolTag = decltype(ExRemovePoolTag)(Addr + *(int*)(Addr + 1) + 5);
        }

        uint32_t PoolTag = 0;
        uint64_t Size = 0;

        // You need to reference the pooltag and size as they are not optional,
        // hence supplying a null pointer will lead to a bugcheck.
        ExRemovePoolTag( Allocation, &PoolTag, &Size, Type );
        return true;
    }

The code snippet above may be effective in stopping many cheats from searching the big pool table. However, it comes with some risks and drawbacks. As noted in the comments, the function is very risky to call and can lead to bugchecks if supplied with invalid arguments or if any other issues arise. Additionally, removing the pool entry can make it impossible to free the allocation, and attempting to do so will cause a bugcheck. This approach may be acceptable for Vanguard’s single 2 MB allocation, since leaking that much memory means almost nothing on modern systems, but it is not a recommended way to handle allocations.

In conclusion, although there are plenty of methods to break cheats, many of them are unsuitable for production, as they may cause issues when deployed at scale. However, there are some tactics that can harden existing tech against abuse, which would allow the Vanguard team to spend more time on more important things. But as a normal day goes in a company, things move quite slowly.
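
The “Resolve reference” step in RemovePoolEntry relies on how an x86-64 `E8` near call is encoded: one opcode byte followed by a signed 32-bit displacement that is relative to the end of the 5-byte instruction. A user-mode sketch of that arithmetic on a byte buffer, using a made-up helper name and assuming a little-endian host, could look like this:

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>

// Returns the buffer offset targeted by an `E8 rel32` call at `callOffset`.
// Hypothetical helper; works on offsets inside `image` rather than raw pointers.
std::size_t ResolveRelativeCall(const std::uint8_t* image, std::size_t callOffset)
{
    // The signed displacement sits right after the one-byte opcode.
    std::int32_t displacement = 0;
    std::memcpy(&displacement, image + callOffset + 1, sizeof(displacement));

    // Target = address of the next instruction (call + 5) + displacement.
    return static_cast<std::size_t>(static_cast<std::int64_t>(callOffset) + 5 + displacement);
}
```

For example, a call at offset 4 with displacement 0x10 resolves to offset 25, and a negative displacement resolves to a target before the call site.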

Proof of concept source⌗

Yumekage is a demo proof of concept showcasing how to create “hidden” memory for specific threads - https://github.com/Xyrem/Yumekage